Why your heritage has never been truly safe

By Sébastien Krajka, Heritage Coordinator at AWA

Like some of the readers of this article, I work in cultural preservation.

So most of my days are spent thinking about what should last and, more importantly, how it actually survives over time.

From physical documents stored in controlled environments to petabytes of data managed across storage systems, formats, and standards, I used to believe that once something was properly preserved, catalogued, digitized, and stored with redundancy, it was, to some extent, safe.

We’ve even established practices like the 3-2-1 backup rule to reduce risk: three copies, on two different types of media, with one stored offsite.
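For readers who like to see a rule written down, here is a minimal sketch of a 3-2-1 check in Python. The `Copy` class, its fields, and the example inventory are hypothetical names chosen for illustration. They do not describe any particular backup tool or AWA system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Copy:
    """One stored copy of a dataset (hypothetical structure for illustration)."""
    label: str     # free-text name of the copy
    medium: str    # e.g. "disk", "tape", "film"
    offsite: bool  # True if kept away from the primary site


def satisfies_3_2_1(copies):
    """Return True if there are at least 3 copies, on at least 2 media types,
    with at least 1 copy stored offsite."""
    media = {c.medium for c in copies}
    return len(copies) >= 3 and len(media) >= 2 and any(c.offsite for c in copies)


# Example inventory (assumed values)
copies = [
    Copy("primary server", "disk", offsite=False),
    Copy("local tape", "tape", offsite=False),
    Copy("remote vault", "tape", offsite=True),
]

print(satisfies_3_2_1(copies))
```

A check like this only confirms that the copies exist on paper. It says nothing about whether the media, the formats, or the sites themselves will last.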

But I now know that such practices rely mainly on assumptions: that systems will remain operational, that formats will stay readable, and that offsite locations will always be safe.

And those assumptions don’t always hold.

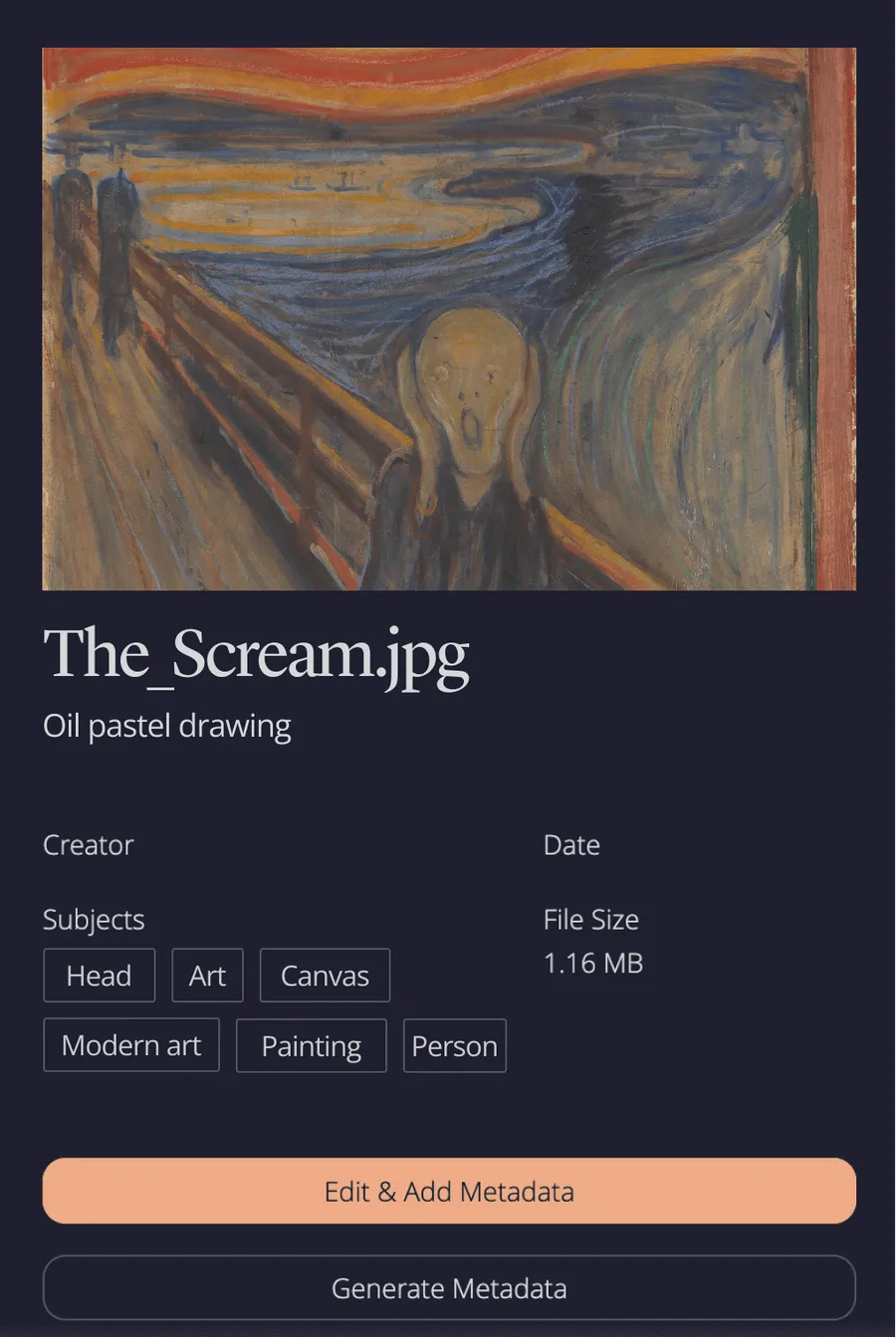

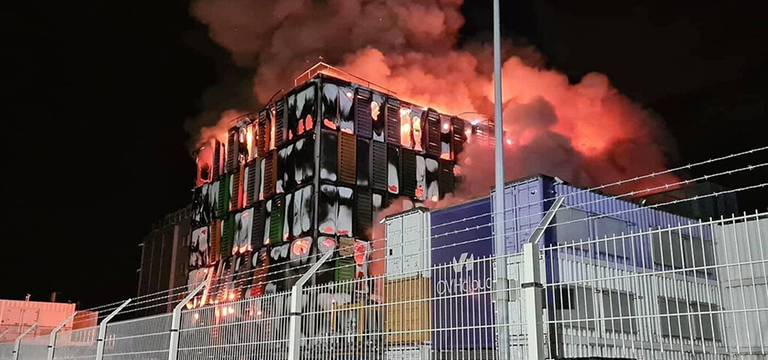

OVH servers burning in Strasbourg (France), 2021

When you look closely at how heritage, especially digital heritage, actually survives, you start to see how many layers it depends on:

- File formats that need to remain readable.

- Hardware that needs to function.

- Software environments that need to be maintained.

- Institutions that need to exist, operate, and be funded.

- And, above all, a broader geopolitical and environmental context that needs to remain stable.

And that’s where the assumption starts to break.

Because none of these layers are guaranteed.

Not in the long term.

Not at the scale we often assume.

Even at the highest level, institutions like UNESCO constantly remind us that cultural heritage is exposed to both human and natural threats, from armed conflict to environmental disasters.

And in a world that is becoming increasingly unstable in 2026, that fragility is no longer theoretical.

War in Iran (2026), Bilal Hussein/AP

Which leads me to a realization I’ve come back to more and more often:

Cultural heritage, including the data we preserve, is not inherently secure. It is fragile by nature.

What are the main data threats today?

When I talk about data threats, I’m not just referring to files, systems, or storage.

I’m talking about everything that data represents: archives, records, knowledge. In other words, what we usually call “heritage”.

From what I have seen, data risks have become more complex and more deeply rooted. We are dealing with large-scale, systemic challenges that are often underestimated until it is too late.

Most of them fall into five main categories:

- War and geopolitical instability: destruction of infrastructure, loss of control, restricted access to data.

- Climate and environmental damage: floods, humidity, temperature variations affecting storage systems and physical media.

- Technological obsolescence: unreadable formats, outdated software, increasing dependency on legacy systems.

- Human neglect and institutional fragility: lack of funding, shifting priorities, interruptions in long-term data preservation strategies.

- Cyber and digital threats: cyberattacks, ransomware, data corruption, and unauthorized access compromising integrity and availability.

Each of these threats operates differently.

Some are sudden; others develop slowly over time.

But they all lead to the same outcome: data loss.

And when data is permanently lost, what disappears is not just information… it’s memory.

What does long-term preservation really require?

If data is exposed to this level of instability, then the way we think about preservation needs to evolve.

Because the more I work on these topics, the more I see that long-term data preservation is a question of design.

Most systems today are built to handle failure: redundancy, backups, replication.

They work.

But they still depend on environments that are assumed to remain stable.

And that’s the real limitation.

So the question becomes:

Not “How do we store data safely?” but “How do we ensure data survives over time, regardless of what happens around it?”

That’s the perspective we’ve been developing at the Arctic World Archive (AWA).

Unlike traditional offsite storage, which still depends on power, software, and continuous maintenance, AWA reduces those dependencies:

Data is stored offline on a durable physical medium called “piqlFilm”, developed by the Norwegian company Piql. It is similar to microfilm but much more durable, and it is kept in a remote and stable environment: a mine in Svalbard.

A piece of piqlFilm and its creator, Rune Bjerkestrand

Not just to store it elsewhere.

But to make sure it can survive independently.

Because in the end, preservation is not about assuming stability.

It’s about designing for its absence.

If you’re exploring long-term data preservation strategies, feel free to reach out. I’m always happy to discuss these topics.